- Pick up after 1 hour from a store with stock

- Free home delivery in Belgium from € 30

- Wide selection of 7 million products

€ 194,95

+ 389 points

Description

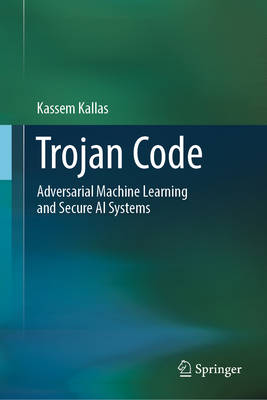

This book provides a comprehensive and accessible guide to the rapidly growing field of AI security, addressing the threats, vulnerabilities, and defensive strategies that shape modern machine-learning systems. The book examines how adversaries exploit poisoned data, hidden triggers, model theft, and privacy leakage to compromise AI, and explains why securing learning systems requires approaches fundamentally different from traditional cybersecurity. Across four structured parts, it maps the threat landscape, dissects backdoor attacks, develops defensive and game-theoretic frameworks, and introduces robust watermarking methods for protecting AI intellectual property. Drawing from real-world case studies in healthcare, finance, autonomous systems, and defense, the book translates academic research into practical insights for evaluating risk, designing resilient models, and understanding the economic and operational impact of AI breaches. Its coverage extends from adversarial examples and federated learning sabotage to ownership verification and governance-aware design. Designed for researchers, engineers, graduate students, and institutional decision-makers, this book serves both as a technical reference and a strategic resource for organizations deploying AI in mission-critical environments. It equips readers with the knowledge needed to anticipate emerging threats and to build AI systems that are not only powerful and efficient, but secure, trustworthy, and resilient by design.

Specifications

Contributors

- Author(s):

- Publisher:

Contents

- Number of pages: 390

- Language: English

Properties

- Product code (EAN): 9783032245212

- Publication date: 08/08/2026

- Edition: Hardcover

- Format: Sewn binding

- Dimensions: 155 mm x 235 mm

Only at Standaard Boekhandel

+ 389 points on your Standaard Boekhandel loyalty card
